ImageBind Reviews and Details

This page is designed to help you find out whether ImageBind is good and whether it is the right choice for you.

Is ImageBind good?

External links

We have collected some useful links here to help you find out if ImageBind is good.

- Check the traffic stats of ImageBind on SimilarWeb. The key metrics to look for are monthly visits, average visit duration, pages per visit, and traffic by country. Moreover, check the traffic sources. For example, "Direct" traffic is a good sign.

- Check the "Domain Rating" of ImageBind on Ahrefs. The domain rating measures the strength of a website's backlink profile on a scale from 0 to 100, so it shows how ImageBind's backlink profile compares to other websites. In most cases a domain rating of 60+ is considered good and 70+ is considered very good.

- Check the "Domain Authority" of ImageBind on Moz. A website's domain authority (DA) is a search engine ranking score that predicts how well a website will rank on search engine result pages (SERPs). It is based on a 100-point logarithmic scale, with higher scores corresponding to a greater likelihood of ranking. This is another useful metric to check if a website is good.

- Read the latest comments about ImageBind on Reddit. This can help you find out how popular the product is and what people think about it.
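As a rough illustration of the Domain Rating thresholds mentioned above (60+ is considered good, 70+ very good), here is a minimal Python sketch. The function name `rate_domain` and the wording of the label for scores under 60 are assumptions made for illustration. They are not part of Ahrefs or any other tool's API.

```python
def rate_domain(score: float) -> str:
    """Map a 0-100 Domain Rating to the rough labels used on this page.

    Hypothetical helper: the thresholds (60 and 70) come from the text above;
    the label for lower scores is an assumption.
    """
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 70:
        return "very good"
    if score >= 60:
        return "good"
    return "below the 'good' threshold"


if __name__ == "__main__":
    # Example scores chosen only to exercise each branch.
    for example in (45, 60, 82):
        print(example, "->", rate_domain(example))
```

The thresholds are only rules of thumb, so treat the returned label as a starting point and not as a verdict on the product.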

Social recommendations and mentions

- Building with Generative AI: Lessons from 5 Projects Part 2: Embedding

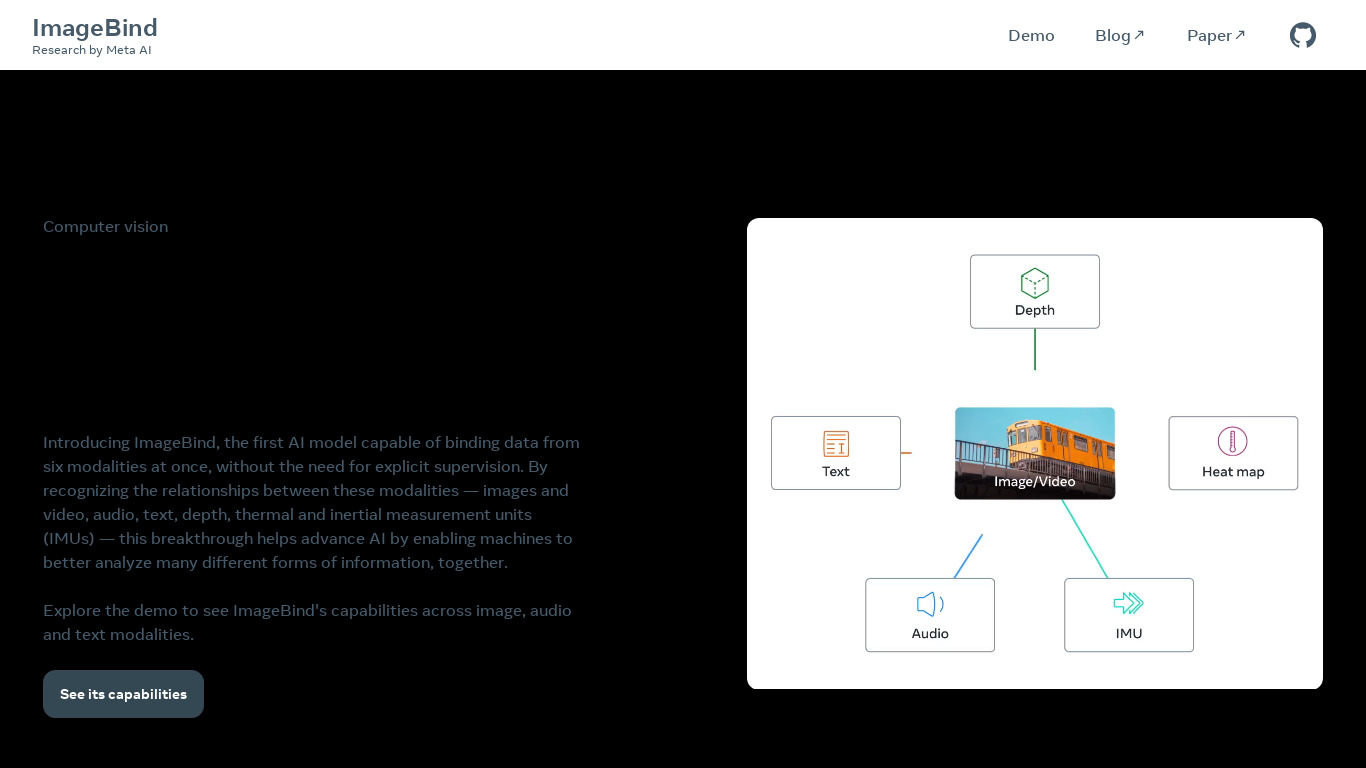

Another multimodal embedding is ImageBind from Meta, which supports text, images, and audio. - Source: dev.to / about 2 months ago

- A Lightweight HuggingGPT Implementation w/ Langchain + Thoughts on Why JARVIS Fails to Deliver

In the approach described above, the main difference between the candidate models is their input/output modality. When can we expect to unify these models into one? The next-generation “AI power-up” for LLM Agents is a single multimodal model capable of following instructions across any input/output types. Combined with web search and REPL integrations, this would make for a rather “advanced AI”, and research in... Source: over 2 years ago

- This Week in AI (5/14/23): US Army wants AI, Google ups their game, and the music wars continue

Google and OpenAI are increasingly restrictive on the research they share, but Meta is taking a different approach. This week: Meta released ImageBind, an AI model capable of “learning” from six different modalities, including depth, thermal, and inertia. Source: over 2 years ago

ImageBind discussion

Is ImageBind good? This informative page will help you find out. You can also review and discuss ImageBind here. The primary details have not been verified within the last quarter, and they might be outdated. If you think we are missing something, please use the means on this page to comment or suggest changes. All reviews and comments are highly encouraged and appreciated, as they help everyone in the community make an informed choice. Please always be kind and objective when evaluating a product and sharing your opinion.